My Favorite Algorithm: having a chat with my friends

On LLMs & why getting good answers is probably making us worse at asking good questions

Exactly a year ago, I wrote an essay here about conceptual containers: terms like “computing work,” “discipline,” or “race” that we use to organize, communicate, and navigate social reality. I argued that we argue about "fairness" not because we disagree that it matters, but because I'm thinking about karma and you're thinking about restorative justice: we're holding different parts of the same concept and assuming the other person is holding the same part. Since writing it, this problem has become a daily feature of my work with LLMs, where I'll ask one to explain a concept back to me just to check whether we're actually conceptualizing the same thing—and I often don't catch misunderstandings until later.

Like humans, LLMs default to conceptual containers. That causes confusion. But unlike humans, current models do not reliably initiate the work of calibrating understanding with you on certain topics. In this essay I argue that our only hope for meaningfully understanding a world full of complex social phenomena is explorative dialogue.

What is a social concept?

A social concept is a shared idea we use to interpret, coordinate, and argue about human life; its meaning depends on people, context, social systems, traditions, and other moving parts. As a result, it’s not possible to “look up” an overview of a social concept the same way we look up an objective fact. This is because the meaning of a social concept is an amalgamation of many things: who is speaking, who is listening, what the stakes are, and what time and place in history we are talking about.

So, concepts like “equity”, “rigor”, “professionalism”, and “healthy relationship” do not have a single meaning, but are packaged assemblies of perspectives, motivations, values, norms, needs, and desires. When we use one of these words, we intend to take the parts of it that we find useful, but language doesn’t really let us do that.

I believe this is why conversations on these topics can feel so loaded. In some cases, we actively quarantine them from conversations because we know they are landmines for frustration.

Analogy: The Computer Parts Problem

There was a point in the 2000s when we could buy individual MacBook RAM sticks. Today Macs have them soldered to the rest of the computer. This is also the case for other Mac parts: if one part fails, you can’t replace it by itself; you replace the whole logic board. The parts still exist in theory, but functionally they do not, because you can’t separate them.

I think social concepts behave in a very similar way. The component parts of a concept exist, but we only have one word for the whole assembly. So if we want to explain that concept to someone else, the most efficient way to do it is to show them the whole assembly. But if they don’t know which specific part you want them to have, they won’t know to do the work of deconstructing it. That work may involve asking you for examples, follow-up questions, and context, and triangulating what you might mean based on things they know you would say or would never ever say.

LLMs don’t ask “What do you mean by that?”

If a friend came to me and asked, “What does a healthy relationship look like?”, I wouldn’t start by answering. I’d start by asking why they’re asking. Did something happen? Are they trying to evaluate a situation they’re in right now? Are they trying to recover from something? Or maybe they’re trying to avoid repeating a pattern?

Then I might share what I’ve learned from my own relationships and what I’ve observed in other people’s. But I’d also be really explicit that “healthy relationship” is not one thing. It’s an assembly. There are components that matter differently depending on the person, the relationship stage, the culture, and what’s actually at stake.

I’d read their facial expression and be afraid that was an overwhelming answer. And so then I’d explain that I think it’s kind of like a healthy workout routine or diet. There are general principles, but people aren’t dealing with the same deficits. Some people need more structure. Some need more softness. Some need to learn to speak. Some need to learn to stop negotiating with someone who has no incentive to understand them. And what you need changes as you get older.

When I ask an LLM the same question, I get a very different kind of response:

Or this:

These answers aren’t wrong necessarily. They are, however, very different from what I get from or give to my friends. The model doesn’t ask what I ask my friends first: it doesn’t ask me what made me ask, what I’m trying to decide, or what kind of “healthy” I mean. It doesn’t ask for stories or share stories with me that it thinks could help me paint a more colorful picture of what health could look like. It responds as though “healthy relationship” is a fully assembled concept, and only then asks follow-up questions or offers additional perspective or advice.

I love talking to my friends about relationships because those conversations are where I realize what I’m missing. Sometimes I realize I was asking the wrong question entirely; and then, over time, I stop thinking about relationships as “good” or “bad” and start seeing components: attachment styles, financial obligations, communication habits, repair, alignment on values, or power dynamics.

My more honest perception of relational health didn’t come from reading a list of signs. It came from years of conversations, storytelling, and difficult follow-up questions. When my friends and I debate relationships, we realize we mean slightly different things by “healthy” or “happy” or “intentional”. And when we do this, we get SO much more than we came for. I get the chance to learn something new about them, about myself, and about relationships. And this new information then changes what I start noticing in romance movies or reality TV shows, how I interpret others’ experiences, and how I respond when somebody else approaches me with a similar question.

The Compression Problem

The problem with social concepts is fundamentally one of compression. Words like “fairness,” “value,” or “rigor” are shortcuts for hundreds of possible scenarios, value hierarchies, and contextual judgments. When I say “fairness,” I might be pointing to equal distribution, or proportional distribution, or need-based distribution, or procedural transparency, or historical redress—and which one depends entirely on what real-world situation I’m trying to describe. The word itself doesn’t tell you. It’s a pointer to a vast space of possibilities, not a fixed referent.

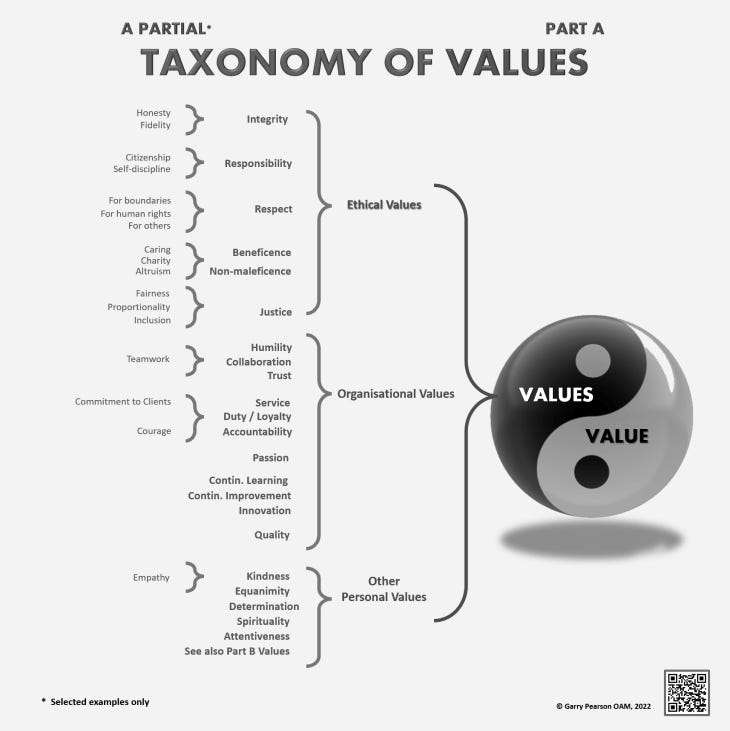
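To make the fairness example concrete, here is a minimal sketch in Python. Everything in it is made up for illustration: the names, the pot of 120 units, and the idea that hours worked stands in for "proportional" and units needed stands in for "need-based". It only shows that one word can point to three different allocations of the same pot.

```python
from fractions import Fraction

# Hypothetical data: three people splitting a pot of 120 units.
POT = 120
HOURS_WORKED = {"Ana": 3, "Ben": 2, "Cy": 1}  # assumed basis for "proportional"
UNITS_NEEDED = {"Ana": 1, "Ben": 1, "Cy": 4}  # assumed basis for "need-based"


def split_by_weight(pot, weights):
    """Divide pot among people in proportion to their weights."""
    total = sum(weights.values())
    return {name: Fraction(pot * w, total) for name, w in weights.items()}


def fair_split(pot, reading):
    """Return an allocation under one named reading of 'fair'."""
    people = list(HOURS_WORKED)
    if reading == "equal":
        weights = {name: 1 for name in people}
    elif reading == "proportional":
        weights = HOURS_WORKED
    elif reading == "need-based":
        weights = UNITS_NEEDED
    else:
        # The word alone does not pick a rule; someone has to say which one.
        raise ValueError(f"'fair' is underspecified: unknown reading {reading!r}")
    return split_by_weight(pot, weights)


for reading in ("equal", "proportional", "need-based"):
    shares = fair_split(POT, reading)
    print(reading, {name: int(s) for name, s in shares.items()})
```

With these invented numbers, Cy gets 40, 20, or 80 units depending on which reading is in play, and nothing in the word "fair" tells you which one the speaker had in mind.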

One of my favorite (most frustrating) examples of this is the social concept of “value”. The word should never be allowed to be used alone because there are so many things wrapped up in it:

This is where the difference between talking to friends and talking to LLMs becomes structural, not just preferential. It isn’t just about the quality of the answer. It’s about what happens to my thinking.

When an LLM gives me a comprehensive answer about a social concept, I get information. When my friend asks me what I mean first, I get something else: I’m forced to articulate which component of the concept I’m actually asking about. And often, I discover I didn’t know.

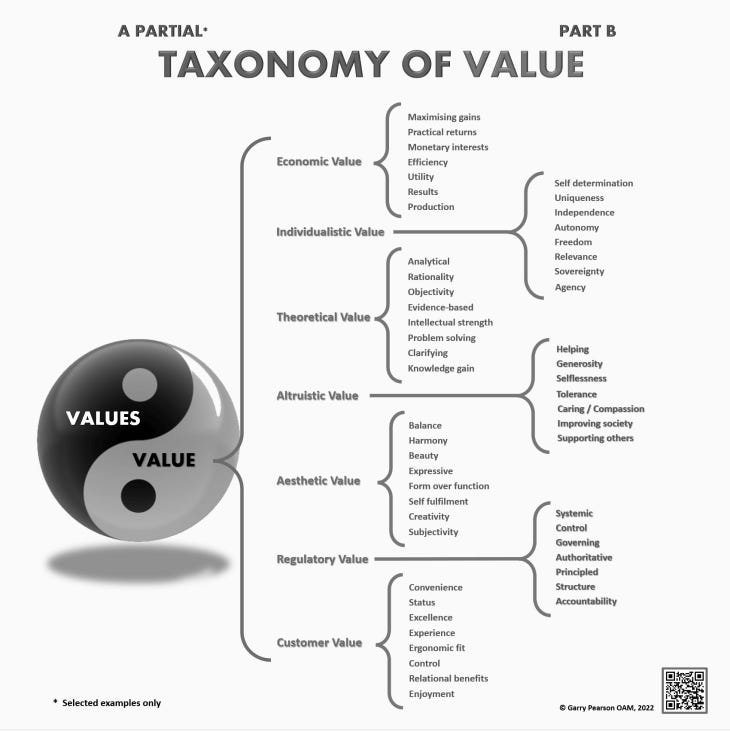

That discovery process (where I have to explain what I mean, hear myself say it, watch my friend’s confused face, try again, get asked a follow-up question I wasn’t expecting, realize I’m talking about something slightly different than I thought) is where I actually learn to think more clearly about social concepts. It’s where I move from having a vague sense of “fairness” to being able to distinguish between distributive fairness, procedural fairness, and restorative justice.

Conversation disentangles and decompresses social concepts

Conversation is the decompression algorithm for social concepts. It's the only reliable process we have for taking an overloaded, context-dependent term and iteratively narrowing down to what we are actually referring to in a specific case. You ask me "what do you mean by fairness?" and I give an example. You say "wait, what about this other case?" and I revise my response. That back-and-forth is how we traverse from the container to its contents.

LLMs can describe social phenomena. They’re so good at this. They can generate examples and then can elaborate beautifully on what a social concept typically means while acknowledging that there’s nuance. But they don't yet reliably do what conversation does: force both parties to keep unpacking and storytelling until we've identified which specific real-world patterns, relationships, or outcomes we're actually talking about. Without that forcing function, we stay at the level of the container — articulate and comprehensive, but ultimately still very compressed.

My Favorite Algorithm

LLMs, as they currently work, can accelerate many kinds of learning. But they may actually slow down one particular kind: the kind where we figure out how to unpack a concept by being forced to defend it, clarify it, and revise it in real time, with someone who doesn’t automatically understand us.

When you discuss a complex topic with an LLM, the calibration work (i.e. the “mmmmm wait, what did you mean by that?” work) is still mostly on us.

If we aren’t careful, we could start to forget that social concepts are assemblies at all. We already do this; the limitations of an LLM can only make it worse.

The container, no matter how well-described, is not the thing itself. And getting to the thing itself, the actual, specific, lived reality that someone is trying to put words to (lord help us), requires the algorithm we’ve had all along. The one where you ask me what I mean, and then I fumble through an explanation, and then you look confused… so I try again. You still think that’s not it but you see where I’m going and share a story that reframes everything. I say “yes, yes exactly” and together we slowly, painfully, beautifully, figure out what we’re actually talking about.

Yeah, that’s my favorite algorithm.

- KUE

Appendix (a couple more, non-relationship, examples):

Example 1: What Makes Good Teaching?

Two faculty members are locked in a heated debate about what “good teaching” really means.

One says it’s simple: good teaching is when students master the material. The other fires back: no, good teaching is when students grow as thinkers.

They spend an entire meeting talking past each other. They’re both right. They’re just pointing at different parts of the same container.

“Good teaching” is not one thing. It’s an assembly of distinct components:

Knowledge transmission: can students actually understand and retain the material?

Mentorship: are students growing as scholars and as people?

Assessment design: can you measure what they really learned, not just what they memorized?

Classroom management: can you build a room where learning can actually happen?

Intellectual modeling: are you showing what expert thinking looks like in real time?

Accessibility: can all kinds of students genuinely access and engage with the material?

Until we see the whole assembly, we’ll keep arguing about which single piece “really” counts. These components matter differently by context. Good teaching in a large intro lecture is not the same as good teaching in a graduate seminar. Good teaching for pre-med students emphasizes different components than good teaching for philosophy majors. If the two faculty members are lucky, one of their colleagues finally says, “I think we mean different things by ‘good teaching.’” They unpack the assembly, see that they value different components, and can then productively discuss which ones matter most in their context.

This is hard for humans. We often don’t realize we’re using the same word for different bundles until we’ve already wasted time and energy arguing. Recent research I’ve been doing at Tech has made me worry about the implications for language-based reasoning models. Consider this scenario:

A graduate student asks an LLM, “What makes good teaching?”

The model answers with characteristics like clarity, engagement, assessment, and rapport. This sounds completely reasonable; Chat seems to be using semantic context to frame its response. Lovely!

BUT.

Chat (as of Jan 2026) can’t help a student see that good teaching is made of components whose importance depends on context. It cannot say, “For your course, students, and institution, assessment design is probably most important because you must prepare them for standardized exams.”

In an ideal scenario, a human mentor who knows the student decently well can. They can ask questions, identify which part of teaching the student needs help with, and break down the concept together.

Example 2: “Equity”

An organization holds a contentious meeting about equity in hiring. Some see equity as treating everyone the same. Others see it as correcting historical exclusion. Others see it as proportional representation. They use the same word but mean very different things, so the meeting stalls.

“Equity” is an assembly of:

Equal treatment (same process for everyone)

Equal access (removing participation barriers)

Equal outcomes (representation proportional to population)

Compensatory justice (correcting historical exclusion)

Recognition (valuing diverse expertise and experience)

Distributional fairness (allocating opportunities by need or merit)

These components can conflict: equal treatment can preserve unequal access, while equal outcomes can require unequal treatment. Members clash because they prioritize different parts of the concept.

If they step back and break it down, they can talk more productively: “Given our organization’s history, our industry’s demographics, our legal constraints, and our values, which aspects of equity matter most to us now?” These conversations are genuinely hard for humans and often fail. When they succeed, it’s because people unpack the container together and identify what they care about.

Now the organization asks an LLM, “How should we think about equity in our hiring process?”

The model can discuss equity, provide definitions, suggest practices, and use contextual cues from the question to shape its response.

BUUUT!

It cannot help the organization understand that equity is an assembly with components that sometimes conflict. It cannot facilitate the conversation about which components matter given their specific organizational context, political environment, legal constraints, and stakeholder pressures.

A human consultant can do this work, with a big asterisk: only if they bring strong domain expertise, a solid grounding in statistics, and the ability to teach and communicate clearly. They must also understand the organization’s specific context in depth, including its internal politics. With this combination of skills, they can help the organization break equity down into its component parts and determine which ones matter most in their particular situation.